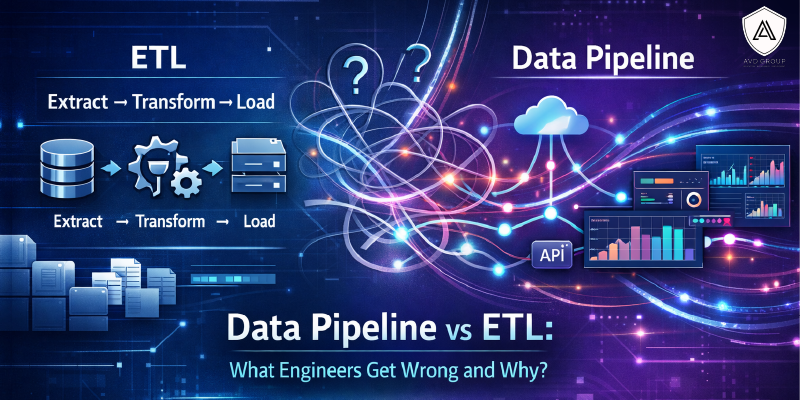

Data Pipeline vs ETL: What Engineers Get Wrong and Why?

ETL and data pipelines often get treated as the same thing. They’re not, and that mix-up matters more than it seems. A lot of data systems get built on that confusion, and fixing them later is never fun. It’s one of those foundational gaps that data engineer classes in Pune address early on, and for good reason.

What’s the Actual Difference Between a Data Pipeline and ETL in Real-World Projects?

In practice, the difference shows up the moment a project gets complicated.

ETL follows a strict order. Extract the data, clean it up, then load it somewhere. Transformation always happens before the data lands anywhere. It works well for structured data and scheduled jobs where quality needs to be locked down first.

Data pipelines are more flexible. They can move data in real time or in batches, load raw data first and transform it later (the ELT pattern), pull from multiple sources at once, and deliver data to different destinations in different formats. That kind of flexibility is what modern data environments usually need.
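The ordering difference between the two approaches can be sketched in a few lines of Python. This is a minimal illustration, not a production design: the in-memory SQLite database stands in for a real warehouse, and the table names (`orders`, `raw_orders`), the sample rows, and the `clean` helper are all hypothetical.

```python
import sqlite3

# Hypothetical source rows: (name, amount) as untyped strings.
RAW_ROWS = [("  Alice ", "42.50"), ("BOB", "17"), ("carol", "n/a")]


def clean(name, amount):
    """Return a cleaned (name, amount) pair, or None if amount is not numeric."""
    try:
        return name.strip().lower(), float(amount)
    except ValueError:
        return None


def run_etl(conn, rows):
    """ETL: transform in application code first, then load only clean rows."""
    conn.execute("CREATE TABLE orders (name TEXT, amount REAL)")
    cleaned = [c for c in (clean(n, a) for n, a in rows) if c is not None]
    conn.executemany("INSERT INTO orders VALUES (?, ?)", cleaned)
    conn.commit()


def run_elt(conn, rows):
    """ELT: load raw rows untouched, then transform inside the destination."""
    conn.execute("CREATE TABLE raw_orders (name TEXT, amount TEXT)")
    conn.executemany("INSERT INTO raw_orders VALUES (?, ?)", rows)
    # The transformation runs as SQL in the destination. The GLOB filter is
    # a deliberately crude "starts with a digit" check for this sketch.
    conn.execute(
        """
        CREATE TABLE orders AS
        SELECT LOWER(TRIM(name)) AS name, CAST(amount AS REAL) AS amount
        FROM raw_orders
        WHERE amount GLOB '[0-9]*'
        """
    )
    conn.commit()


if __name__ == "__main__":
    etl_db = sqlite3.connect(":memory:")
    elt_db = sqlite3.connect(":memory:")
    run_etl(etl_db, RAW_ROWS)
    run_elt(elt_db, RAW_ROWS)
    print(etl_db.execute("SELECT * FROM orders ORDER BY name").fetchall())
    print(elt_db.execute("SELECT * FROM orders ORDER BY name").fetchall())
```

Both functions end with the same cleaned `orders` table. The difference is that the ELT version also keeps `raw_orders`, so the transformation can be changed and rerun later without going back to the source.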

Here’s where engineers get tripped up in real projects:

- Applying ETL to real-time data, which slows things down in places where speed actually matters

- Pulling entire datasets every day instead of just the new or changed data, which burns through time and money unnecessarily

- Building something that works in testing but breaks the moment real conditions hit, like data arriving late or volumes spiking unexpectedly

- Not monitoring after launch, so the first sign that something broke is a dashboard showing numbers that stopped updating days ago

- Not accounting for reruns, so when something fails and needs a rerun, it creates duplicates instead of fixing the original problem

Why Do So Many Beginners Confuse Data Pipelines With ETL, Even After Training?

ETL came first. For a long time, it was the standard way data got moved and prepared for analysis. It worked well when data was structured, volumes were manageable, and waiting overnight for a report was acceptable. When data pipelines came along, many people assumed they were just a new name for the same thing. They aren't.

Think of ETL as a single assembly line in a factory. It does one job, follows a fixed sequence, and does it well. A data pipeline is the entire factory. Multiple assembly lines running at once, different material handling, finished products going to different places, all without stopping.

ETL is a type of data pipeline. A data pipeline is not just an upgraded ETL. That difference matters more than most people realise.

Are Modern Data Roles Moving Beyond Traditional ETL Toward Full Pipeline Architecture?

Yes, and it’s happening faster than most people realise.

Nobody wants to wait until tomorrow for data they need today. If a bank needs to catch a suspicious transaction, it can’t wait for a nightly report. If an online store wants to show you products you might like, it needs to know what you just clicked, not what you clicked last week.

ETL still works in certain situations. Some industries have strict rules about how data gets handled before it goes anywhere, and in those cases, ETL makes sense. It’s not going away completely.

For most businesses, though, that’s not enough anymore. They need data moving constantly, coming from multiple places, going to multiple places, and updating in real time. That’s what a full data pipeline does.

Do Data Engineer Classes in Pune Explain When to Use ETL vs a Full Data Pipeline?

Good programmes do, and that’s what sets them apart. Reading about ETL and data pipelines is one thing. Actually working through a real problem and deciding which one fits is where the learning happens.

The best programmes put you in situations where you have to make that call. That’s what builds judgment, and judgment is what the job runs on. A few things worth getting familiar with, regardless of where you are in your learning:

- The difference between batch processing and real-time streaming, and when each one makes sense

- What ELT is and why it’s become more common in cloud environments

- How orchestration tools keep pipeline tasks running in the right order at the right time

- What data observability means and why monitoring a pipeline matters as much as building it

- How governance works when data moves across different teams or has to meet regulatory requirements
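Two of the topics above, orchestration and observability, are easier to grasp with a small example. The sketch below uses Python's standard-library `graphlib` (Python 3.9+) to run tasks in dependency order, plus a simple freshness check of the kind a monitor might run. It is a toy illustration, not how any particular orchestration tool works. The task names and the `run_in_order` and `is_stale` helpers are hypothetical.

```python
from datetime import datetime, timedelta, timezone
from graphlib import TopologicalSorter


def run_in_order(tasks, depends_on):
    """Run every task after the tasks it depends on; return the order used.

    tasks:      mapping of task name -> zero-argument callable
    depends_on: mapping of task name -> set of task names that must run first
    """
    graph = {name: depends_on.get(name, set()) for name in tasks}
    order = list(TopologicalSorter(graph).static_order())
    for name in order:
        tasks[name]()
    return order


def is_stale(last_loaded_at, max_age, now=None):
    """Freshness check: True if the last successful load is older than max_age."""
    now = now or datetime.now(timezone.utc)
    return now - last_loaded_at > max_age


if __name__ == "__main__":
    log = []
    tasks = {
        "load": lambda: log.append("load"),
        "extract": lambda: log.append("extract"),
        "transform": lambda: log.append("transform"),
    }
    deps = {"transform": {"extract"}, "load": {"transform"}}
    print(run_in_order(tasks, deps))

    last_load = datetime.now(timezone.utc) - timedelta(hours=30)
    print(is_stale(last_load, max_age=timedelta(hours=24)))
```

The dictionary lists `load` first on purpose: the execution order comes from the declared dependencies, not from the order in which the tasks were written. The freshness check targets the failure mode where a dashboard quietly stops updating, since it flags a load that has not succeeded within the expected window.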

Which Data Engineer Classes in Pune Focus More on Practical Pipeline Building Instead of Just ETL Theory?

This question is worth asking before committing to any programme. A curriculum built around outdated tools will give you some foundation, but it won’t prepare you for what real projects and interviews actually look like today.

Practical pipeline building means working with streaming frameworks, not just batch jobs. Designing for failure is part of it. So is treating governance and data quality as built-in requirements, rather than things you bolt on later. AVD Group’s data engineering classes in Pune are designed with that in mind: current tools, real-world scenarios, and less time on theory that doesn’t translate to the job.

The Takeaway

ETL is not obsolete. It still has a place. The mistake isn’t using it; it’s not knowing when to use it and when not to.

Data engineering classes in Pune with AVD Group cover both approaches, the tools, and real-world situations where one makes more sense than the other. If you’re serious about building a career in data engineering, reach out to us at info@avd-group.in and sign up!

Frequently Asked Questions

- What is the primary difference between Data Pipelines and ETL?

A data pipeline is any system that moves data from sources to destinations, whether in batches or in real time. ETL is one specific kind of pipeline: it extracts data, transforms it, and then loads it into a specific destination, usually in scheduled batches.

- Can Data Pipelines handle unstructured data?

Yes, data pipelines are pretty flexible that way. They can handle structured, semi-structured, and unstructured data, so you're not limited to clean, neatly formatted datasets.

- What are the emerging trends in Data Pipelines and ETL processes?

Cloud-native setups, serverless computing, and AI integration are the big ones right now. Teams are moving toward architectures that are faster, more scalable, and smarter about how they process and analyse data.